@thom463s Can you confirm your Roblox Studio version? You can find this by going to File > About Roblox Studio

Hello, my issue has been fixed now!

It turns out my Studio install was outdated, but there was no auto-update, so I just reinstalled it.

Theoretically, yes. People would be able to reproduce any sort of sound they want using sine waves, and this would include swear words.

I’ve experimented in this field and wasn’t able to achieve any significant results though. This may be due to issues with the current audio API such as desynchronization, and potentially more things I was unable to uncover.
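To illustrate why desynchronization would matter for this kind of experiment, here is a rough numerical sketch in plain Python (not Roblox code). It sums a sine wave with an inverted copy of itself, once perfectly aligned and once with the copy arriving late. The 48 kHz sample rate, the 1 kHz tone, and the 10-sample delay are all assumptions picked for illustration, not values taken from the engine.

```python
import math

SAMPLE_RATE = 48_000  # assumed sample rate in Hz (illustrative only)

def sine(freq_hz, n_samples, delay_samples=0, invert=False):
    """Generate a sine wave, optionally delayed and/or polarity-inverted."""
    sign = -1.0 if invert else 1.0
    return [
        sign * math.sin(2 * math.pi * freq_hz * (n - delay_samples) / SAMPLE_RATE)
        for n in range(n_samples)
    ]

def peak_of_sum(a, b):
    """Largest absolute sample value after mixing two signals together."""
    return max(abs(x + y) for x, y in zip(a, b))

n = 4800  # 0.1 s of audio at the assumed sample rate
original = sine(1000.0, n)

# Inverted copy, perfectly aligned: the pair cancels to silence.
aligned_peak = peak_of_sum(original, sine(1000.0, n, invert=True))

# Inverted copy that starts 10 samples (about 0.2 ms) late: no longer silent.
late_peak = peak_of_sum(original, sine(1000.0, n, delay_samples=10, invert=True))

print(aligned_peak, late_peak)
```

With these assumed numbers, the late pair leaves a residue louder than either wave on its own, so even a small timing offset between players would be enough to spoil cancellation.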

Here’s a chunk from my attempt at playing 440 pairs of sine waves that were supposed to cancel themselves out:

Would it ever be possible for Roblox to implement a way to record audio streams and replay them later (without exposing actual audio information other than length and pitch)? This could have awesome use cases such as in-chat voice messages, recording music, etc.

Hey, is there any distance limit to audio emitters? Trying to make this work for a rocket but it just cuts off at a certain distance away from the audio listeners.

There’s an adjustable DistanceAttenuation that lets you decide at which distances the audio gets louder, quieter, cannot be heard at all, etc.

The announcement thread for that new functionality explains it in more detail:

Yeah but the problem is no matter what we do the sound cuts off at a certain point. We’ve tried the distance attenuation and it still cuts off when it gets too far away from the listener.

Do you have StreamingEnabled turned on in the game? Maybe what’s happening is that the AudioEmitter is so far away that it’s being streamed out for the client, which would cause the sound to stop playing.

If that appears to be what is happening, try placing the parent object of the emitter into a Model and then set its ModelStreamingMode to Persistent so it is always loaded for the player.

Hey @panzerv1, we’ve been working on a new Instance type that incorporates a lot of the feedback you gave on the equalizer; keep an eye out for an announcement!

I’m not sure if this question has been asked already since there are a ton of replies, but why is the Volume property of AudioPlayer and AudioFader capped at 3? This is really limiting and feels extremely arbitrary. @ReallyLongArms

oh god that does not sound right

I tried connecting GetPropertyChangedSignal to the RmsLevel and PeakLevel properties of an AudioAnalyzer, but it never fires.

The level changes, but nothing is printed to the console.

It is running on the client.

It would be great if there were an event specifically for tracking changes, instead of having to check constantly in a loop.

why is the Volume property of AudioPlayer and AudioFader capped at 3? This is really limiting and feels extremely arbitrary.

@Rocky28447 it is a little bit arbitrary, but since the Volume property is in units of amplitude, making something 3x louder can have an outsized impact. With the Sound/SoundGroup Volume property going all the way up to 10x (and VideoFrame going up to 100x), it was/is pretty easy to accidentally blow your ears out.

If you want to go beyond 3x, you can chain AudioFaders to boost the signal further – but if that is too inconvenient we can revisit the limits.

It would be great if there were an event specifically for tracking changes instead of using a loop to check constantly.

Hey @lnguyenhoang42, PeakLevel and RmsLevel get updated on a separate audio mixing thread, and we didn’t want to imply that scripts would be able to observe every change, since they happen too quickly. Studio also uses property change signals to determine when it should re-draw various UI components, and frequently-firing properties can bog down the editor.

add more effects like noise removal

I have a question, will it soon be possible to apply audio effects similarly to how we applied sound effects?

I’m making a voice chat system where if someone is behind a wall, their voice will get muffled. While rewiring one effect isn’t much of a hassle, the connections might start looking like spaghetti once more effects get added.

Instead of putting everything in a linear order, will it soon be possible to have a standalone effect and connect it to an emitter via a wire?

Here’s an image of what I mean:

Yeah, chaining three AudioFader instances really isn’t ideal for recreating the old behavior.

Also, I’d like to request an additional audio instance be added that allows for adjusting the volume of many AudioPlayer instances simultaneously. The use case for this is to recreate the behavior of SoundGroup instances. Multiple Sound instances can be assigned to a single SoundGroup and this allows for easy implementation of things like in-game audio settings for different types of audio, since the single SoundGroup can be used to adjust all Sound instances assigned to it.

Currently, AudioFader instances cannot serve this use case, as piping multiple AudioPlayer instances through a single AudioFader will stack them all on top of each other. We would need some instance that can output into an AudioPlayer and control its output volume (or just add this behavior to AudioFader).

My game has precise audio controls, which include a “Master” control as well as controls for individual audio types, such as ambient FX, ambient music, UI sounds, and more. This was very easy to set up with the old system. I just created one SoundGroup called “Master” with the other categories underneath it, and then assigned Sound instances to their respective SoundGroup. Under the new system, recreating this behavior is a massive pain.

chaining three AudioFader instances really isn’t ideal for recreating the old behavior

Since .Volume is multiplicative, if you set AudioPlayer.Volume to 3, you’d only need one AudioFader with .Volume = 3 to boost the asset’s volume 9x.
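For anyone who wants to check the arithmetic, here is a small sketch in plain Python (not Roblox code). It assumes only what is stated above: each stage multiplies amplitude by its Volume, and each Volume is capped at 3. The helper names are made up for illustration, and the decibel conversion is the standard 20·log10 amplitude formula.

```python
import math

VOLUME_CAP = 3.0  # per-instance Volume cap discussed in this thread

def chain_gain(volumes):
    """Overall amplitude multiplier for Volume values applied in series."""
    return math.prod(volumes)

def to_decibels(gain):
    """Convert an amplitude multiplier to decibels."""
    return 20.0 * math.log10(gain)

def stages_needed(target_gain, cap=VOLUME_CAP):
    """Count how many capped stages (AudioPlayer included) reach target_gain."""
    stages, gain = 0, 1.0
    while gain < target_gain:
        gain *= cap
        stages += 1
    return stages

# AudioPlayer.Volume = 3 feeding one AudioFader with Volume = 3
boost = chain_gain([3.0, 3.0])
print(boost, round(to_decibels(boost), 1))  # 9.0 19.1

# Matching the old 10x Sound limit takes one more stage than 9x does
print(stages_needed(10.0))  # 3
```

So 9x needs the AudioPlayer plus one AudioFader, while a full 10x needs the AudioPlayer plus two AudioFaders (the last one only has to contribute about 1.11x).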

I wonder if it would be more useful to have something like an AudioPlayer.Normalize property – is the desire for a 10x volume boost coming from quiet source-material?

Currently, AudioFader instances cannot serve this use case, as piping multiple AudioPlayer instances through a single AudioFader will stack them all on top of each other

SoundGroups are actually AudioFaders under the hood – so it should be possible to recreate their exact behavior in the new API. The main difference is that Sounds and SoundGroups “hide” the Wires that the audio engine is creating internally, whereas the new API has them out in the open.

When you set the Sound.SoundGroup property, it’s exactly like wiring an AudioPlayer to a (potentially-shared) AudioFader.

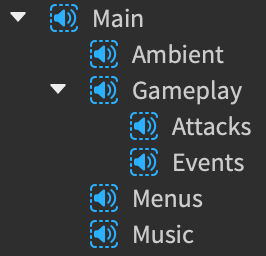

I.e. this

is equivalent to

Similarly, when parenting one SoundGroup to another, it’s exactly like wiring one AudioFader to another

I.e. this

is equivalent to

If there are effects parented to a Sound or a SoundGroup, those get applied in sequence before going to the destination, which is exactly like wiring each effect to the next.
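As a rough way to picture the SoundGroup-to-AudioFader mapping described so far, here is a toy model in plain Python (not Roblox code). The `Fader` class, its `target` field, and the group names are hypothetical stand-ins for AudioFaders joined by Wires. It only tracks volume, on the assumption that each fader multiplies the signal by its Volume and passes it along.

```python
class Fader:
    """Toy stand-in for an AudioFader; `target` models the outgoing Wire."""

    def __init__(self, name, volume=1.0, target=None):
        self.name = name
        self.volume = volume
        self.target = target  # next fader in the chain, or None for the output

def effective_gain(player_volume, fader):
    """Multiply a player's volume by every fader it passes through."""
    gain = player_volume
    while fader is not None:
        gain *= fader.volume
        fader = fader.target
    return gain

# A "Master" group with two child groups, like nested SoundGroups.
master = Fader("Master", volume=0.5)
music = Fader("Music", volume=0.8, target=master)
sfx = Fader("SFX", volume=1.0, target=master)

# Two players wired to the shared Music fader, one wired to SFX.
print(effective_gain(1.0, music))  # theme song
print(effective_gain(0.5, music))  # ambient loop
print(effective_gain(1.0, sfx))    # button click

# Turning Master down scales everything that is wired through it.
master.volume = 0.25
print(effective_gain(1.0, music), effective_gain(1.0, sfx))
```

The point of the sketch is that a shared fader scales every player wired into it, and a fader wired into another fader behaves like a child SoundGroup under its parent.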

Things get more complicated if a Sound is parented to a Part or an Attachment, since this makes the sound 3d – internally, it’s performing the role of an AudioPlayer, an AudioEmitter, and an AudioListener all in one.

So this

is equivalent to

where the Emitter and the Listener have the same AudioInteractionGroup

And if the 3d Sound is being sent to a SoundGroup, then this

is equivalent to

Exposing wires comes with a lot of complexity, but it enables more flexible audio mix graphs, which don’t need to be tree-shaped. I recognize that the spaghetti can get pretty rough to juggle – I used SoundGroups extensively in past projects, and made a plugin with keyboard shortcuts to convert them to the new instances, which is how I’ve been generating the above screenshots. The code isn’t very clean, but I can try to polish it up and post it if that would be helpful!

Hey @MonkeyIncorporated – we recently added a .Bypass property to all the effect instances.

When an effect is bypassed, audio streams will flow through it unaltered; so I think you could use an AudioFilter or an AudioEqualizer to muffle voices, and set it to .Bypass = true when there’s no obstructing wall. We have a similar code snippet in the AudioFilter documentation, but we used the .Frequency property instead of .Bypass – I think either would work in this case.
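To show what "flows through unaltered" means in practice, here is a minimal sketch in plain Python (not Roblox code). The one-pole low-pass filter is a generic stand-in for a muffling effect, not how AudioFilter is actually implemented, and the `alpha` value, the 6 kHz test tone, and the 48 kHz sample rate are assumptions chosen for illustration.

```python
import math

def low_pass(samples, alpha=0.1, bypass=False):
    """Simple one-pole low-pass; returns the input untouched when bypassed."""
    if bypass:
        return list(samples)
    out, y = [], 0.0
    for x in samples:
        y += alpha * (x - y)  # move a fraction of the way toward the input
        out.append(y)
    return out

# A bright 6 kHz tone standing in for the high-frequency part of a voice.
voice = [math.sin(2 * math.pi * 6000 * n / 48_000) for n in range(480)]

clear = low_pass(voice, bypass=True)     # no wall: effect bypassed
muffled = low_pass(voice, bypass=False)  # wall in the way: effect active

print(clear == voice)
print(max(abs(s) for s in muffled) < max(abs(s) for s in voice))
```

Toggling the flag switches between the untouched signal and the muffled one without rewiring anything, which is the behavior the .Bypass suggestion relies on.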

Instead of putting everything in a linear order, will it soon be possible to have a standalone effect and connect it to an emitter via a wire?

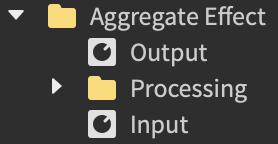

A trick that I’ve used is having two AudioFaders act as the Input/Output of a more-complicated processing graph that’s encapsulated somewhere else.

Here, the inner Processing folder might have a variety of different effects already wired up to the Input/Output. Then, anything wanting to use the whole bundle of effects only has to connect to the Input/Output fader(s).

Does this help?

Ah, I suppose that’s a bit better.

More so it’s just coming from a desire to not need an AudioFader where one wasn’t needed previously. Every additional audio instance in the chain requires setting up wires, so minimizing the need for extra instances where possible is ideal.

This image helps a lot. I was trying to apply the group fader before the AudioPlayer in the chain, which isn’t allowed. I didn’t think about applying the group fader between the listener and the output and creating a listener for each group using AudioInteractionGroup, but this makes a lot more sense. Thanks for the tip!

This works well for what I’m doing, thanks for sharing!